On the Study of Temporal Constraints for Voice Alignment with Deep Networks

Yann Teytaut carried out his thesis, "On the study of temporal constraints for voice alignment with deep networks" ("De l'étude de contraintes temporelles pour l'alignement de voix par réseaux profonds"), within the Sound Analysis and Synthesis team (Analyse et synthèse des sons) of the STMS Laboratory (Ircam, Sorbonne Université, CNRS, Ministère de la Culture) and the Paris Doctoral School of Computer Science, Telecommunications and Electronics (École doctorale Informatique, télécommunications et électronique de Paris). His research was funded by the ANR ARS project ( http://ars.ircam.fr/ ).

He invites you to his thesis defense at Ircam on Friday, July 7, at 9:30 a.m., or to follow it live on Ircam's YouTube channel: https://youtube.com/live/O5RWUl_vZ9M . The presentation will be given in English.

The jury will be composed of:

- Prof. Gaël Richard, Professor, Télécom Paris (Reviewer)

- Dr. Emmanouil Benetos, Senior Lecturer, Queen Mary University of London (QMUL) (Reviewer)

- Prof. Jean-Pierre Briot, Research Director, LIP6 (CNRS/SU) (Examiner)

- Dr. Emmanuel Vincent, Research Director, Inria Nancy-Grand Est (Examiner)

- Dr. Rachel Bittner, Research Manager, Spotify Inc. (Examiner)

- Dr. Romain Hennequin, Head of Research, Deezer (Examiner)

- Dr. Chitralekha Gupta, Research Fellow, National University of Singapore (NUS) (Examiner)

- Dr. Axel Roebel, Research Director, Ircam (Thesis Supervisor)

Abstract

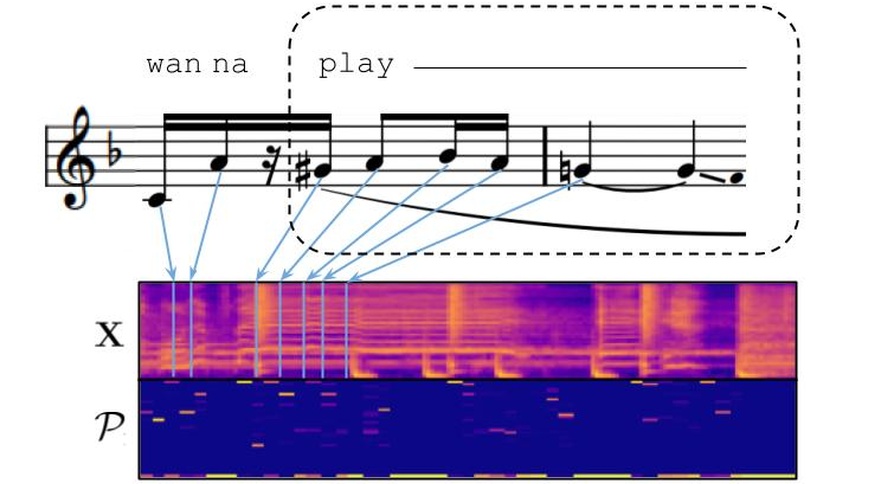

Listening to one another, responding, making things coincide, coordinating, attuning, following, adapting, being in unison, synchronizing, aligning... The rich vocabulary devoted to matching human activities in time shows how much their temporal organization matters. Human communication, multimodal by nature, is fully concerned by this issue, since there is a semantic gap between spoken utterances and their symbolic sequences: how can a written message be interpreted correctly without vocal intonation? What performance style lies beyond a fixed musical score? This thesis sets out to reveal and explain the complex relationships between the audio and symbolic domains in order to narrow this gap, through a fine-grained study of the temporality inherent in voice recordings. At the heart of this objective lies the voice alignment task, which aims to determine when the symbols assumed to be present in a vocal signal occur in time. This work focuses in particular on the development of an acoustic model, ADAGIO, capable of estimating such time-symbol links. Recent advances in deep learning lead us to implement ADAGIO as a deep neural network within a powerful generic formalism: Connectionist Temporal Classification (CTC). However, the great flexibility offered by CTC is undermined by its intrinsic lack of guarantees that its predictions are temporally precise. The key contributions of this research aim to strengthen CTC with additional temporal constraints in order to improve the quality of the inferred alignments. To this end, three auxiliary tasks are introduced: (1) spectral content reconstruction, (2) audio structure propagation, and (3) guided monotonicity. They have a positive impact on alignment between voice, text, and notes.

As a result, ADAGIO contributes to numerous practical applications through collaborations, such as concatenative speech synthesis and the study of the expressive production strategies at play, both in social attitudes in speech and in singing style in musical performances.
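The abstract describes voice alignment as locating in time symbols that are assumed to be present in a vocal signal, using a network trained with CTC. To make that step concrete, here is a minimal sketch of generic CTC forced alignment (a Viterbi best-path search over per-frame posteriors) in plain Python. It is not ADAGIO and does not implement the thesis's additional temporal constraints; the function name `ctc_forced_align`, the blank index 0 and the toy posteriors are hypothetical assumptions made for illustration.

```python
import math

NEG_INF = float("-inf")


def ctc_forced_align(log_probs, target, blank=0):
    """Best-path CTC forced alignment (illustrative sketch, hypothetical API).

    log_probs: T x V nested list of per-frame log-probabilities, assumed to
        come from some CTC-trained acoustic model.
    target: symbol ids (without blanks) assumed present in the signal.
    Returns one (start_frame, end_frame) span per target symbol, end inclusive.
    """
    T = len(log_probs)
    if T == 0:
        raise ValueError("no frames to align")
    # Extended label sequence: blank, y1, blank, y2, ..., yL, blank.
    ext = [blank]
    for y in target:
        ext += [y, blank]
    S = len(ext)

    score = [[NEG_INF] * S for _ in range(T)]
    back = [[-1] * S for _ in range(T)]
    # A path may start on the leading blank or directly on the first symbol.
    score[0][0] = log_probs[0][ext[0]]
    if S > 1:
        score[0][1] = log_probs[0][ext[1]]

    for t in range(1, T):
        for s in range(S):
            # CTC transitions: stay, advance by one, or skip the blank between
            # two different symbols. States never move backwards.
            cands = [s]
            if s >= 1:
                cands.append(s - 1)
            if s >= 2 and ext[s] != blank and ext[s] != ext[s - 2]:
                cands.append(s - 2)
            prev = max(cands, key=lambda p: score[t - 1][p])
            if score[t - 1][prev] == NEG_INF:
                continue  # state unreachable at this frame
            score[t][s] = score[t - 1][prev] + log_probs[t][ext[s]]
            back[t][s] = prev

    # A valid path ends on the last symbol or on the trailing blank.
    finals = [S - 1] + ([S - 2] if S > 1 else [])
    s = max(finals, key=lambda f: score[T - 1][f])
    if score[T - 1][s] == NEG_INF:
        raise ValueError("too few frames to align this target")

    # Backtrack the best state path, one state per frame.
    path = [0] * T
    for t in range(T - 1, -1, -1):
        path[t] = s
        s = back[t][s]

    # Collapse the state path into per-symbol frame spans.
    spans = [None] * len(target)
    for t, s in enumerate(path):
        if ext[s] != blank:
            k = (s - 1) // 2  # position of this symbol in `target`
            start = t if spans[k] is None else spans[k][0]
            spans[k] = (start, t)
    return spans


if __name__ == "__main__":
    # Made-up posteriors over {0: blank, 1: "a", 2: "b"} for 6 frames.
    probs = [
        [0.8, 0.1, 0.1],
        [0.1, 0.8, 0.1],
        [0.1, 0.8, 0.1],
        [0.8, 0.1, 0.1],
        [0.1, 0.1, 0.8],
        [0.8, 0.1, 0.1],
    ]
    log_probs = [[math.log(p) for p in row] for row in probs]
    print(ctc_forced_align(log_probs, [1, 2]))  # [(1, 2), (4, 4)]
```

Note that this decoding step only follows where the network places its posterior mass; nothing in it forces the spans to match the true acoustic boundaries. That is consistent with the abstract's point that CTC alone offers no guarantee of temporally precise predictions.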