Spat

The Spatialisateur (commonly called Spat) is a tool dedicated to real-time sound spatialization. It lets artists, sound designers and sound engineers control the position of sound sources in a three-dimensional space: starting from signals produced by instrumental or synthetic (electronic) sources, Spat generates the spatial effects and delivers the signals that feed an electroacoustic setup (whether a set of loudspeakers or headphones).

Designed in a modular way, the Spatialisateur lets the user specify and automate spatialization parameters independently of the chosen rendering mode and electroacoustic setup. Spatialization work can therefore begin at the composition stage on a basic reproduction setup (binaural playback over headphones) and then be reused directly, or with minor adjustments, on the more ambitious setups required by interactive sound installations or concerts.
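The separation between what a scene describes and how it is rendered can be illustrated with a minimal sketch. This is not Spat's internal design: the `Source`, `Renderer`, `StereoRenderer` and `NearestSpeakerRenderer` names, the azimuth convention (0° in front, positive to the left) and the ±30° stereo base are hypothetical choices made for illustration. The scene is written once, and each renderer turns it into per-loudspeaker gains for its own layout.

```python
import math
from dataclasses import dataclass
from typing import List, Protocol


@dataclass
class Source:
    """A source in the scene: only its direction, no loudspeaker information."""
    azimuth_deg: float  # assumed convention: 0 = front, positive = to the left


class Renderer(Protocol):
    """Anything that turns a source position into per-loudspeaker gains."""
    def gains(self, src: Source) -> List[float]: ...


class StereoRenderer:
    """Constant-power stereo panning over a +/-30 degree loudspeaker pair."""
    def gains(self, src: Source) -> List[float]:
        az = max(-30.0, min(30.0, src.azimuth_deg))  # clamp to the stereo base
        theta = (az + 30.0) / 60.0 * (math.pi / 2)  # map [-30, 30] deg to [0, pi/2]
        return [math.sin(theta), math.cos(theta)]  # [left, right], L^2 + R^2 = 1


class NearestSpeakerRenderer:
    """Routes each source to the closest loudspeaker of an arbitrary ring."""
    def __init__(self, speaker_azimuths_deg: List[float]):
        self.speakers = speaker_azimuths_deg

    def gains(self, src: Source) -> List[float]:
        def angular_distance(a: float, b: float) -> float:
            return abs((a - b + 180.0) % 360.0 - 180.0)

        dists = [angular_distance(src.azimuth_deg, s) for s in self.speakers]
        nearest = dists.index(min(dists))
        return [1.0 if i == nearest else 0.0 for i in range(len(self.speakers))]


# The same scene is rendered on two different setups without being edited.
scene = [Source(azimuth_deg=20.0), Source(azimuth_deg=-100.0)]
renderers: List[Renderer] = [
    StereoRenderer(),
    NearestSpeakerRenderer([0.0, 60.0, 120.0, 180.0, 240.0, 300.0]),
]
for renderer in renderers:
    print(type(renderer).__name__, [[round(g, 3) for g in renderer.gains(s)] for s in scene])
```

Swapping the renderer changes the output format without touching the scene, which is the property the paragraph above describes.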

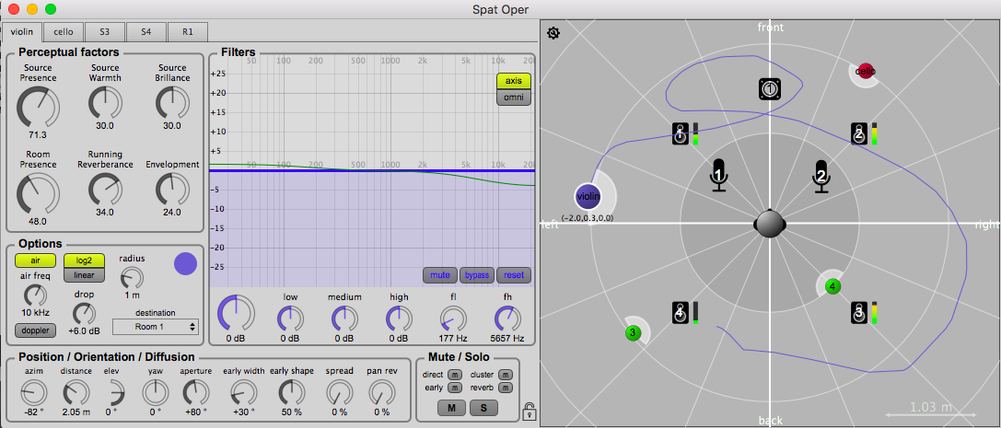

Another distinctive feature of the Spatialisateur is the way it controls the room effect: perceptual criteria let the user specify the acoustic character of the room intuitively, without resorting to acoustical or architectural vocabulary.

Main features

Spat is built on a powerful signal-processing library written in C++. The Spat software suite comes as a set of modules that plug into the Max/MSP environment (Max external objects). Spat contains more than 250 external objects, a large number of patches (abstractions), help patches and tutorials, an extensive HRTF database, and more. Spat modules are configurable, and most of them can process up to 8192 input/output channels.

The main features are:

- sound spatialization (panning) in 2D or 3D, including: stereo (AB, XY, MS), binaural rendering over headphones (with near-field compensation) and transaural rendering over loudspeakers, vector-base amplitude panning (VBAP), vector-base intensity panning (VBIP), distance-based amplitude panning (DBAP), nearest-neighbor amplitude panning (KNN), speaker-placement correction amplitude panning (SPCAP), B-format and higher-order Ambisonics (HOA) with no restriction on the encoding/decoding order, higher-order Ambisonics with near-field compensation (NFC-HOA), wave field synthesis (WFS), layer-based amplitude panning (LBAP), etc.

- artificial reverberation: scalable, configurable multichannel reverberation based on a feedback delay network; real-time, zero-latency multichannel convolution.

- perceptual control of the acoustic quality of the room effect: warmth and brilliance; source presence/proximity; room presence; early and late reverberance; intimacy, liveness. Simplified control of source radiation (aperture and orientation).

- low-level signal processing: equalization, Doppler effect, air absorption, etc.

- graphical interface for controlling, editing and visualizing spatial sound scenes.

- many objects for creating, manipulating and transforming spatial trajectories.

- many tools for handling multichannel audio signals: reading and writing multichannel files (spat.sfplay~, spat.sfrecord~) with up to 250 channels; multichannel equalizer, compressor and limiter.

- tools for measuring room responses and calibrating electroacoustic setups: delay and level measurement and alignment; measurement, analysis and denoising of room impulse responses. Headphone monitoring of multichannel streams.

- various audio effects: stereo widening, Leslie cabinet simulation, ping-pong delay, graphic equalizer, parametric equalizer, etc.

- fully controllable via OSC.

- import, export and real-time rendering of object-based audio content following the ADM (Audio Definition Model) standard.
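Of the panning methods listed above, VBAP is among the simplest to sketch. The function below is a hypothetical, horizontal-only (2D) illustration of the textbook pairwise formulation, not Spat's implementation: for each adjacent pair of loudspeakers it solves p = g1·l1 + g2·l2 for the source direction p, keeps the pair where both gains are non-negative, and normalizes the gains to constant power.

```python
import math
from typing import List, Sequence, Tuple


def unit(az_deg: float) -> Tuple[float, float]:
    """Unit vector in the horizontal plane for an azimuth in degrees."""
    a = math.radians(az_deg)
    return (math.cos(a), math.sin(a))


def vbap_2d(source_az: float, speaker_az: Sequence[float]) -> List[float]:
    """Pairwise 2D VBAP gains for a horizontal ring of loudspeakers.

    Speakers must be listed in increasing azimuth order (degrees).
    """
    n = len(speaker_az)
    p = unit(source_az)
    gains = [0.0] * n
    for i in range(n):
        j = (i + 1) % n  # adjacent pair (i, j), wrapping around the ring
        l1, l2 = unit(speaker_az[i]), unit(speaker_az[j])
        det = l1[0] * l2[1] - l1[1] * l2[0]
        if abs(det) < 1e-9:
            continue  # collinear pair: cannot span the plane
        # Solve p = g1 * l1 + g2 * l2 with Cramer's rule.
        g1 = (p[0] * l2[1] - p[1] * l2[0]) / det
        g2 = (l1[0] * p[1] - l1[1] * p[0]) / det
        if g1 >= -1e-9 and g2 >= -1e-9:  # source lies between the two speakers
            norm = math.hypot(g1, g2)  # constant-power normalization
            gains[i], gains[j] = max(g1, 0.0) / norm, max(g2, 0.0) / norm
            return gains
    raise ValueError("source direction not covered by any speaker pair")
```

For a square layout, `vbap_2d(45.0, [0.0, 90.0, 180.0, 270.0])` splits the signal equally between the first two loudspeakers, while a source at 300° gets more gain on the 270° loudspeaker than on the 0° one.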
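B-format encoding, also listed above, can be sketched at first order. This hypothetical example assumes the traditional Furse-Malham weighting (W scaled by 1/√2), an azimuth measured counter-clockwise from the front and an elevation measured upward. Spat's HOA modules are not limited to first order, and the normalization they use should be checked in their documentation rather than inferred from this sketch.

```python
import math
from typing import List, Tuple


def encode_bformat(sample: float, azimuth_deg: float,
                   elevation_deg: float) -> Tuple[float, float, float, float]:
    """Encode one mono sample into first-order B-format (W, X, Y, Z).

    Assumed convention: Furse-Malham weighting (W scaled by 1/sqrt(2)),
    azimuth counter-clockwise from the front, elevation upward.
    """
    az, el = math.radians(azimuth_deg), math.radians(elevation_deg)
    w = sample / math.sqrt(2.0)                   # omnidirectional component
    x = sample * math.cos(az) * math.cos(el)      # front-back figure-of-eight
    y = sample * math.sin(az) * math.cos(el)      # left-right figure-of-eight
    z = sample * math.sin(el)                     # up-down figure-of-eight
    return w, x, y, z


def encode_block(block: List[float], azimuth_deg: float,
                 elevation_deg: float) -> List[List[float]]:
    """Encode a block of samples; returns four channel lists [W, X, Y, Z]."""
    channels: List[List[float]] = [[], [], [], []]
    for s in block:
        for ch, value in zip(channels, encode_bformat(s, azimuth_deg, elevation_deg)):
            ch.append(value)
    return channels
```

Because the four channels describe the sound field rather than loudspeaker feeds, the same encoded signal can later be decoded for different loudspeaker layouts.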
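The feedback delay network named in the reverberation feature is a standard structure: several delay lines whose outputs are mixed by an orthogonal matrix and fed back with a gain below 1. The sketch below is a generic four-line FDN with a Hadamard feedback matrix; the delay lengths and the gain `g` are arbitrary assumptions, and it is not Spat's reverberator (it leaves out, for instance, frequency-dependent decay).

```python
from typing import List, Tuple


def fdn_reverb(x: List[float], delays: Tuple[int, ...] = (149, 211, 263, 293),
               g: float = 0.85) -> List[float]:
    """Minimal 4-line feedback delay network (FDN) reverberator.

    Each delay line's output is mixed through an orthogonal 4x4 Hadamard
    matrix (scaled by 1/2), attenuated by g < 1 and fed back into the lines.
    """
    if len(delays) != 4:
        raise ValueError("this sketch uses exactly four delay lines")
    n = len(delays)
    buffers = [[0.0] * d for d in delays]  # one circular buffer per line
    pos = [0] * n
    y = []
    for sample in x:
        # The value at pos was written d samples ago: read it before overwriting.
        outs = [buffers[i][pos[i]] for i in range(n)]
        a, b, c, d = outs
        # Orthogonal Hadamard mixing keeps the loop stable for |g| < 1.
        mixed = [
            0.5 * (a + b + c + d),
            0.5 * (a - b + c - d),
            0.5 * (a + b - c - d),
            0.5 * (a - b - c + d),
        ]
        for i in range(n):
            buffers[i][pos[i]] = sample + g * mixed[i]
            pos[i] = (pos[i] + 1) % delays[i]
        y.append(sum(outs) / n)
    return y
```

Feeding an impulse, for example `fdn_reverb([1.0] + [0.0] * 48000)`, yields a decaying, increasingly dense impulse response; a longer decay is obtained by moving `g` closer to 1.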
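The Doppler effect listed among the low-level treatments is commonly obtained by modeling propagation as a delay of r/c seconds: when the distance r changes over time, reading the delay line at a moving position shifts the pitch. The sketch below illustrates that technique under stated assumptions (c = 343 m/s, a 1/r gain clamped at 1 m, linear interpolation); it is not Spat's code and it omits air absorption.

```python
import math
from typing import List, Tuple

SPEED_OF_SOUND = 343.0  # m/s, assumed value for air at about 20 degrees C


def propagation(distance_m: float, fs: float) -> Tuple[float, float]:
    """Return (delay in samples, 1/r gain) for a source at distance_m."""
    r = max(distance_m, 1.0)  # clamp at 1 m to avoid the 1/r singularity
    return r / SPEED_OF_SOUND * fs, 1.0 / r


def render_moving_source(x: List[float], distances: List[float], fs: float) -> List[float]:
    """Render a mono signal through a time-varying propagation delay.

    distances[n] is the source-listener distance (m) at output sample n and
    must have at least len(x) entries. A changing delay makes the read
    position advance at a rate different from 1, which shifts the pitch.
    Simplification: distance is taken at reception time n, not emission time.
    """
    y = []
    for n in range(len(x)):
        delay, gain = propagation(distances[n], fs)
        t = n - delay  # fractional emission time, in input samples
        i = math.floor(t)
        if i < 0:
            y.append(0.0)  # emission time precedes the input: silence
            continue
        frac = t - i
        a = x[i]
        b = x[i + 1] if i + 1 < len(x) else 0.0
        # Linear interpolation between the two neighbouring input samples.
        y.append(gain * ((1.0 - frac) * a + frac * b))
    return y
```

With a decreasing list of distances (an approaching source), the delay shrinks over time, so the input is read slightly faster than real time and the pitch rises.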
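For the delay and level alignment of a loudspeaker setup, a common approach is to delay and attenuate every loudspeaker so that it matches the farthest one at the listening position. The hypothetical function below derives these corrections from known distances under a free-field 1/r assumption; Spat's calibration tools obtain them by acoustic measurement, so the function name and its inputs are illustrative only.

```python
import math
from typing import Dict, List


def alignment(distances_m: List[float], fs: float, c: float = 343.0) -> List[Dict[str, float]]:
    """Per-speaker delay (samples) and gain (dB) so that every loudspeaker
    reaches the listening position at the same time and level as the
    farthest one. Assumes free-field propagation and 1/r level decay."""
    d_max = max(distances_m)
    result = []
    for d in distances_m:
        delay_samples = (d_max - d) / c * fs    # closer speakers wait longer
        gain_db = 20.0 * math.log10(d / d_max)  # closer speakers are attenuated
        result.append({"delay_samples": delay_samples, "gain_db": gain_db})
    return result
```

For two loudspeakers at 2 m and 4 m sampled at 48 kHz, the closer one is delayed by about 280 samples and attenuated by about 6 dB, while the farther one is left unchanged.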
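Since Spat is fully controllable via OSC, any program able to send UDP packets can act as a controller. The sketch below encodes an OSC 1.0 message by hand with the Python standard library (NUL-padded strings aligned to 4 bytes, a type-tag string, big-endian float32 arguments). The address `/source/1/xyz`, the host and the port 4002 are hypothetical placeholders: the actual address space and listening port depend on the Spat objects and the patch in use.

```python
import socket
import struct


def osc_string(s: str) -> bytes:
    """OSC string: ASCII bytes, NUL-terminated, padded to a multiple of 4."""
    b = s.encode("ascii") + b"\x00"
    return b + b"\x00" * (-len(b) % 4)


def osc_message(address: str, *floats: float) -> bytes:
    """Encode an OSC 1.0 message whose arguments are all float32."""
    type_tags = "," + "f" * len(floats)
    payload = b"".join(struct.pack(">f", v) for v in floats)  # big-endian float32
    return osc_string(address) + osc_string(type_tags) + payload


def send(msg: bytes, host: str = "127.0.0.1", port: int = 4002) -> None:
    """Send one OSC packet over UDP (host and port are placeholders)."""
    with socket.socket(socket.AF_INET, socket.SOCK_DGRAM) as sock:
        sock.sendto(msg, (host, port))
```

A controller would then call something like `send(osc_message("/source/1/xyz", 1.0, 2.0, 0.5))` on every update from a sensor, tablet or tracker.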

Available on macOS and Windows.

Application areas

- Composition

- Film and music

- Post-production

- Research and development

- Virtual reality

- Sound design

Spat is used in many contexts:

- Concerts, sound installations and real-time spatialization. The composer can assign a specific position in space to each musical event or sound element of the score. Spat can be controlled from a software sequencer, slaved to a score follower, or driven algorithmically. Because it is integrated into the Max/MSP environment, Spat can easily be driven by external sensors and controllers (position-tracking devices, tablets, smartphones, joysticks, gesture sensors, etc.).

- Mixing and post-production. A spatialization module can be attached to each channel strip of a mixing console.

- Virtual reality. Spatial hearing plays a major role in the sense of presence and immersion in virtual reality applications and interactive installations. For these applications, Spat's binaural rendering (3D playback over headphones) is particularly well suited. Binaural rendering is all the more convincing when the engine is coupled to a tracking device (following the listener's position and/or orientation) or to gestural control.
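In the simplest case, coupling the binaural engine to a head tracker amounts to counter-rotating the scene: a source that is fixed in the room must be rendered at its azimuth minus the listener's head yaw. The sketch below is a hypothetical illustration that handles yaw only and assumes azimuth and yaw both increase counter-clockwise (to the left); a complete implementation would also compensate pitch and roll, and position when it is tracked.

```python
def wrap_deg(angle: float) -> float:
    """Wrap an angle in degrees to the interval [-180, 180)."""
    return (angle + 180.0) % 360.0 - 180.0


def head_relative_azimuth(source_az_deg: float, head_yaw_deg: float) -> float:
    """Azimuth of a room-fixed source as heard by a rotated head (yaw only).

    Assumed convention: both angles increase counter-clockwise (to the left).
    When the listener turns left by head_yaw_deg, the source must move the
    same amount to the right relative to the head, so the rotation is inverted.
    """
    return wrap_deg(source_az_deg - head_yaw_deg)
```

On each tracker update, the returned azimuth would be used to select or interpolate the HRTF pair applied to that source, so the source stays put in the room while the head moves.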
